LED

An LED must always be used with a resistor.

This is because LEDs have what is called a “threshold voltage”: once the voltage across the LED exceeds it, the current rises very quickly. Put simply, slightly too much current burns the LED out immediately, so the resistor acts as protection by limiting that current.

We therefore have to choose which resistor to use. There is a simple formula to calculate the minimum resistance.

Rmin = (Ualim - Uled) / Imax

Unless you have some good leftovers from your physics class, I’m guessing it doesn’t get you too far. Small explanation:

- Rmin: Minimum resistance to use, expressed in ohms (Ω)

- Ualim: Power supply voltage, expressed in volts (V)

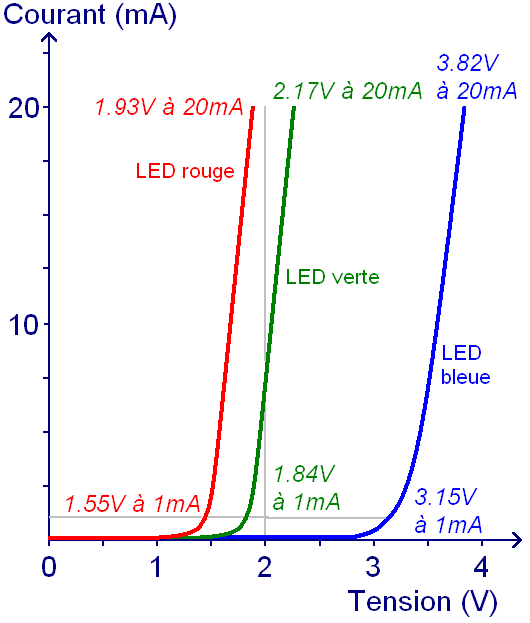

- Uled: LED threshold voltage, expressed in volts (V)

- Imax: The maximum current of the LED, expressed in amperes (A)

The LED has a maximum current of 20 mA (20 mA = 0.020 A) and a threshold voltage between 1.5 and 1.9 V.
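As a worked example of the formula above, here is a minimal Python sketch. The 5 V supply is an assumption chosen only for illustration (these notes do not state a supply voltage), and the function name `min_resistance` is hypothetical.

```python
def min_resistance(u_alim: float, u_led: float, i_max: float) -> float:
    """Rmin = (Ualim - Uled) / Imax, with volts and amperes in, ohms out."""
    if i_max <= 0:
        raise ValueError("i_max must be positive")
    if u_alim <= u_led:
        raise ValueError("the supply voltage must exceed the LED threshold voltage")
    return (u_alim - u_led) / i_max


U_ALIM = 5.0   # assumed supply voltage in volts (not from the notes)
I_MAX = 0.020  # 20 mA, from the notes

# The threshold voltage is given as a range, so evaluate both ends.
r_low_threshold = min_resistance(U_ALIM, 1.5, I_MAX)   # about 175 ohms
r_high_threshold = min_resistance(U_ALIM, 1.9, I_MAX)  # about 155 ohms

# The lowest threshold leaves the most voltage across the resistor,
# so it is the worst case and sets the safe minimum.
r_safe = max(r_low_threshold, r_high_threshold)
print(f"Use at least {r_safe:.0f} ohms")
```

Taking the larger of the two results keeps the current at or below 20 mA wherever the actual threshold falls in the 1.5 to 1.9 V range.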

Lighting an LED

- External reference: http://www.lafabriquediy.com/tutoriel/allumer-une-led-1-44/

In general, an LED needs about 3 V and 30 mA (milliamperes) to operate.

Rule of thumb: R = (V - 3) * 33, with R in ohms and V the supply voltage in volts (33 ≈ 1 / 0.030 A).

LED and series resistor calculation - Astuces Pratiques

- External reference: https://www.astuces-pratiques.fr/electronique/led-et-calcul-de-la-resistance-serie

When an LED is conducting, the voltage across its terminals is fairly independent of the current.

Since the resistor and the LED are in series, the current through the resistor equals the current through the LED, that is, 20 mA.

LED circuit

- External reference: https://en.wikipedia.org/wiki/LED_circuit

LED circuit - Wikipedia

Typically, the forward voltage of an LED is between 1.8 and 3.3 volts. It varies by the color of the LED. A red LED typically drops around 1.7 to 2.0 volts, but since both voltage drop and light frequency increase with band gap, a blue LED may drop around 3 to 3.3 volts.

- R = (Vpower - Vled) / Iled

20 mA (0.020 A) is a common operating current for many small LEDs.

As a rule of thumb, since the LED voltage is around 2 V and a current of 5 mA does no harm, I can always start with a resistor of value R = (V - 2) / 0.005 = (V - 2) * 200, with R in ohms and V the supply voltage in volts.
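Both rules of thumb quoted in these notes, R = (V - 3) * 33 and R = (V - 2) * 200, are the general formula R = (Vpower - Vled) / Iled with a fixed LED voltage and current. The sketch below checks that numerically; the 9 V supply is an assumption for illustration only, and the function names are hypothetical.

```python
def series_resistor(v_power: float, v_led: float, i_led: float) -> float:
    """General formula: R = (Vpower - Vled) / Iled, in ohms."""
    return (v_power - v_led) / i_led


def rule_of_thumb_30ma(v_power: float) -> float:
    """Shortcut for a 3 V, 30 mA LED: R = (V - 3) * 33."""
    return (v_power - 3) * 33


def rule_of_thumb_5ma(v_power: float) -> float:
    """Conservative shortcut for a 2 V LED at 5 mA: R = (V - 2) * 200."""
    return (v_power - 2) * 200


V = 9.0  # assumed supply voltage in volts (not from the notes)

exact_30ma = series_resistor(V, 3.0, 0.030)  # about 200 ohms
approx_30ma = rule_of_thumb_30ma(V)          # 198 ohms, since 33 rounds down 1 / 0.030
exact_5ma = series_resistor(V, 2.0, 0.005)   # about 1400 ohms
approx_5ma = rule_of_thumb_5ma(V)            # 1400 ohms, since 200 is exactly 1 / 0.005

print(f"30 mA rule: {approx_30ma:.0f} ohms vs exact {exact_30ma:.0f} ohms")
print(f" 5 mA rule: {approx_5ma:.0f} ohms vs exact {exact_5ma:.0f} ohms")
```

The 5 mA rule gives a much larger resistor than the 30 mA rule, which is why it works as a safe starting point: the LED is dimmer but well below its maximum current.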

Connecting an LED to a battery - Astuces Pratiques

- External reference: https://www.astuces-pratiques.fr/electronique/brancher-une-led-sur-une-pile

Special electronic circuits exist to step up the voltage of a 1.5 V battery (1.6 V when it is fresh, then about 1.2 V toward the end of its life). These circuits are called “Joule thieves”: they exploit the energy left in the battery when it is “empty”, that is, no longer usable by another device.

The LED threshold voltage is 1.8 V for red, orange, and amber LEDs, and 3 V for white, high-brightness green, and blue LEDs.
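The figures above explain why a step-up circuit is needed: even a fresh 1.5 V cell stays below every threshold voltage listed. A minimal Python sketch of that comparison follows; the dictionary and function names are hypothetical, and the values are the ones quoted in these notes.

```python
# Threshold voltages in volts, by LED color, as quoted in the notes.
THRESHOLD_V = {
    "red": 1.8,
    "orange": 1.8,
    "amber": 1.8,
    "white": 3.0,
    "high-brightness green": 3.0,
    "blue": 3.0,
}

FRESH_CELL_V = 1.6  # a full 1.5 V battery
WORN_CELL_V = 1.2   # the same battery near the end of its life


def reaches_threshold(battery_v: float, color: str) -> bool:
    """True if the battery voltage alone is at or above the LED threshold."""
    return battery_v >= THRESHOLD_V[color]


colors_lit_by_fresh_cell = [
    color for color in THRESHOLD_V if reaches_threshold(FRESH_CELL_V, color)
]
print(colors_lit_by_fresh_cell)  # empty list: no color reaches its threshold
```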